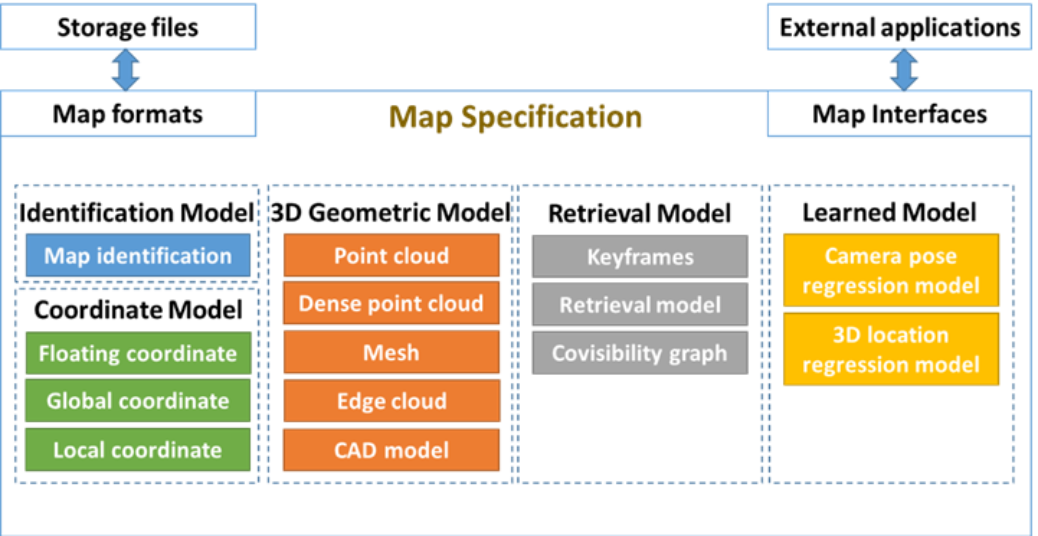

An accurate Digital Twin/BIM model, i.e., the ARTwin map enriched with additional information such as instance segmentation, is necessary for robust localization of AR devices, precise 3D registration of virtual content with the physical environment, and measurement and analysis of desired properties. Example use cases include planning a new production line or detecting construction mistakes before they cause damage and financial losses. We assume two kinds of mapping services: sparse and dense. Sparse mapping is realized by either a SLAM or an SfM method and yields sparse point clouds, constructed by triangulating keypoints detected in images, together with camera poses, timestamps, and other localization information. The dense point cloud is constructed by a Multi-View Stereo (MVS) method, which we may enrich with depth-camera data from the device. The goal of the dense mapping service is to compute a dense point cloud for subsequent processing such as meshing, texturing, or semantic segmentation. Together, the sparse and dense mapping services provide the information needed to extend and update the current digital copy of the physical environment.
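To make the keypoint triangulation step of sparse mapping concrete, the following is a minimal sketch of linear (DLT) two-view triangulation with NumPy. It is an illustration only, not the ARTwin implementation: the function names `triangulate_point` and `project`, the intrinsics `K`, and the 0.2 m baseline are assumptions chosen for the example, and a real SLAM/SfM pipeline would add outlier rejection and non-linear refinement.

```python
import numpy as np


def triangulate_point(P1, P2, x1, x2):
    """Linear (DLT) triangulation of one matched keypoint seen in two views.

    P1, P2: 3x4 camera projection matrices (hypothetical inputs).
    x1, x2: pixel coordinates (u, v) of the same keypoint in each image.
    Returns the 3D point in the common world frame.
    """
    # Each view contributes two linear constraints on the homogeneous point X.
    A = np.stack([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector of the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


def project(P, X):
    """Project a 3D point with a 3x4 projection matrix to pixel coordinates."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


# Assumed pinhole intrinsics and two camera poses (illustrative values only).
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
# Second camera shifted 0.2 m along x, i.e. translation t = -R @ C = (-0.2, 0, 0).
P2 = K @ np.hstack([np.eye(3), np.array([[-0.2], [0.0], [0.0]])])

X_true = np.array([0.1, -0.05, 2.0])
X_est = triangulate_point(P1, P2, project(P1, X_true), project(P2, X_true))
```

With noise-free projections, `X_est` recovers `X_true` up to numerical precision; with real keypoint detections the result is only a least-squares estimate in the algebraic sense, which is why such a step is typically followed by bundle adjustment.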

To better recognize objects in 2D images, we developed a pipeline for generating training and test images using Blender, the myGym framework, the AI Habitat simulator, Neural Rerendering, and the NeRF-based IBRNet. The experimental evaluation of the 2D segmentation showed that we can adapt the segmentation to our use-case environment, and it highlighted the actual challenges and the requirements placed on the 3D model.